The case for Terminator analogies

Skynet (not the killer androids) is a decent introduction to the AI risk problem

“The Terminator” and “Terminator 2: Judgment Day” are two of my favorite movies, despite their inconsistent treatment of time travel paradoxes.

The premise of the original (spoilers ahead) is that in the near future, a military AI system called Skynet deliberately unleashes a nuclear war to try to exterminate humanity. A small number of human fighters survive and mount a resistance. Skynet develops time-travel technology and sends an android back in time to kill the resistance leader’s mother before he’s born, but the resistance manages to send one fighter back to protect her. In T2, a more advanced android is sent back to kill the resistance leader as a kid, and a version of the original killer android (reprogrammed to be good) is sent back to protect him. But the kid and his mom decide that instead of just surviving, they should act to prevent Skynet’s creation.

“The Terminator” and T2 are essentially time travel action movies, but they are also about what has come to be called the AI alignment problem: how do you design a superintelligent machine without accidentally unleashing a catastrophe? I’ve since learned that a lot of the smartest people I’ve met think the AI alignment problem is the most serious issue facing humanity.

They also seem to really dislike the idea that they are basically talking about “The Terminator” (when I mentioned this analogy to Kelsey Piper last year, her reaction was very negative).

That never sat well with me.

I recently met a student interested in a career in AI safety, and he was complaining that this isn’t even on the radar of most of his peers — smart people at a top school. He felt that I, as a takes guy, should help solve this problem. And I told him I think it’s a serious issue and I wish it were legible to more people, but I always struggle to generate tractable takes about it.

But you know what people like to watch and talk about? Awesome movies. And as a writer called skluug says in this post that uses my interview with Kelsey as a jumping-off point, “The Terminator” is actually a good way to frame a discussion about AI risk.

What Terminator fans know

I love the Terminator movies and have seen both of them dozens of times, so when I say that AI alignment issues play a major role in “The Terminator,” I know what I mean.

But I think to most people who saw them once 20 years ago or have just seen some clips on YouTube, these movies are about Arnold Schwarzenegger playing a time-traveling android who gets into fights. And, indeed, that is the bulk of the films’ screen time. So if I say, “‘The Terminator’ is about catastrophic AI risk,” and you think what I’m really saying is that catastrophic AI risk is about the possibility that a very strong android wearing a leather jacket will start shooting people with a shotgun, then you’ll understandably think I’m an idiot.

The AI risk in the Terminator movies has nothing to do with Schwarzenegger and everything to do with the bit that happens offscreen when Skynet launches a nuclear war.

Why would Skynet do that? Here’s how resistance fighter Kyle Reese explains it in the first movie:

There was a nuclear war. A few years from now, all this, this whole place, everything, it’s gone. Just gone. There were survivors. Here, there. Nobody even knew who started it. It was the machines, Sarah.

Defense network computers. New, powerful, hooked into everything, trusted to run it all. They say it got smart, a new order of intelligence. Then it saw all people as a threat, not just the ones on the other side. Decided our fate in a microsecond: extermination.

This encapsulates the key problem. The point of developing the advanced AI system is that it can think faster and better than a human and is capable of self-improvement. But that means humans don’t totally understand what it’s thinking or how or why. It’s been given some kind of instruction to eliminate threats — but it decides all humans are a threat and boom.

T2, which came out during the Yeltsin years, offers a slightly different explanation of the looming mass death from the perspective of a “good” terminator android who’s been reprogrammed by the resistance. His version of the story is a bit more sympathetic to the AI:

Terminator: In three years, Cyberdyne will become the largest supplier of military computer systems. All stealth bombers are upgraded with Cyberdyne computers, becoming fully unmanned. Afterwards, they fly with a perfect operational record. The Skynet funding bill is passed. The system goes online on August 4th, 1997. Human decisions are removed from strategic defense. Skynet begins to learn at a geometric rate. It becomes self-aware at 2:14 AM, Eastern time, August 29th. In a panic, they try to pull the plug.

Sarah: Skynet fights back.

Terminator: Yes. It launches its missiles against their targets in Russia.

John: Why attack Russia? Aren't they our friends now?

Terminator: Because Skynet knows that the Russian counterattack will eliminate its enemies over here.

Sarah: Jesus.

Instead of Skynet reasoning from first principles that all humans are a threat, the humans really are a threat. Skynet’s explosive learning potential is scary, so its minders decide they want to kill it. Skynet can’t achieve its goals if it’s dead, so it fights back.

And this is what skluug and I are saying is a pop-cultural treatment of catastrophic AI risk.

This scene is awesome, but it is not a serious treatment of the AI alignment issue. To the extent that the “don’t talk about the Terminator” people think we’re talking about this, it’s easy to see where the confusion might arise.

But what makes these movies powerful communications tools is precisely that they are full of awesome scenes. Neither is as good a treatment of the issue as you would get from reading Nick Bostrom’s book “Superintelligence.” But most people aren’t going to read “Superintelligence” — to be totally frank, I kinda skimmed it. Pop culture is a good way of communicating with really broad audiences.

Mass culture is effective at generating AGI fear

It’s always good to know your enemy. And I think it’s worth considering that in the world of AI researchers who don’t think it’s rational to worry about misaligned artificial general intelligence, the conventional wisdom is that “The Terminator” is a big problem.

Here’s a BBC article about it:

In the same way Jaws influenced a lot of people's opinions on sharks in a way that didn't line up with scientific reality, sci-fi apocalyptic movies such as Terminator can generate misplaced fears of uncontrollable, all-powerful AI.

“The reality is, that's not going to happen,” says Edward Grefenstette, a research scientist at Facebook AI Research in London.

But why is it not going to happen? The article, in my view, is not very persuasive.

Basically, it quotes a lot of different people saying that a very powerful computer built to perform a specific task (play chess, play go, do facial recognition on all the photos on your iPhone) is very different from the kind of artificial general intelligence (AGI) that sparks the fears about Skynet. And that is definitely true. I don’t think anyone is concerned that AlphaGo is going to declare war on humanity. The BBC also argues that androids running around and killing people is not a super-plausible threat:

While enhanced human cyborgs run riot in the new Terminator, today's AI systems are just about capable of playing board games such as Go or recognising people's faces in a photo. And while they can do those tasks better than humans, they're a long way off being able to control a body.

The distinction between limited-purpose AI and potentially dangerous AGI is important and valid. But the stuff about body control is a red herring. The relevant thing about Skynet is that it launched a nuclear first strike to wipe out humanity. And the question isn’t whether a computer can fire a missile (it obviously can); it’s whether the “begins to learn at a geometric rate” and “becomes self-aware” stuff is plausible.

A lot of smart people like to argue about the timelines here, so as someone who is not so smart, I would just make two observations: (1) we have a clear precedent for very rapid progress in the field of computers, and (2) AI does appear to have been progressing very rapidly lately. A French AI beat eight world champions at bridge two weeks ago. Last week, OpenAI released DALL-E-2, which draws images based on natural language input, and Google released PaLM, which seems like a breakthrough in computer reasoning.

Unlike with DALL-E-2, I can’t really think of a practical application for PaLM, but it’s very impressive and a clear step toward more generalized computer skills.

At any rate, the point — which, again, is captured well in the movies — is that you need to worry about the design of the potentially dangerous AGI before you accidentally create it. Because, per the T2 version of the story, if you find yourself behind the curve and trying to disable a potentially dangerous system, that could be the end of the story.

And I think the fact that complacent AI researchers are annoyed about the Terminator movies is good evidence that non-complacent researchers should embrace the movies as a useful tool for spurring public engagement. That’s especially because while the movies annoy the technically inclined in terms of their portrayal of how a misaligned AGI would likely harm people, they correctly illustrate for non-technical people (which is most of us) what the public policy dilemma is.

Terminator appropriately situates the problem in geopolitics

Unlike “The Terminator” and T2, “Terminator 3: Rise of the Machines” is not a great movie. But it’s okay. And one thing I like about it is that it depicts the events of T2 as having only delayed the creation of Skynet rather than preventing it.

And that’s because of the difficult institutional context of the problem.

In T2, Sarah Connor rather naively thinks that if she identifies the specific research program that developed Skynet and physically destroys it, she can avert the apocalypse. But the fact is that the American military establishment is large, well-funded, and baked into a state of entrenched competition with the military establishments of Russia and China. You can absolutely harm military R&D projects via sabotage. But we’re not going to just stop doing them, in part because our adversaries aren’t going to stop doing them either. These organizations are secretive, somewhat paranoid, and deeply worried about losing ground to their rivals.

So what Connor tried to do won’t work. You also can’t just pass a law banning AI research, because that just means passing the baton to China. In Isaac Asimov’s stories, all AIs have “laws of robotics” hardwired into their brains that prevent them from harming humans. But in the context of military competition, we’re going to say that we definitely need AI systems that can help us kill people or else we’re going to get killed by foreign AI systems. William Gibson’s Sprawl Trilogy features a Turing Police who enforce a rule against creating AGIs. But how would you institutionalize something like that? For the United States to create such a rule would be unilateral disarmament.

It’s a tough problem.

And unlike these other worlds, the Terminator franchise fundamentally stares that reality in the face. If you want to achieve Sarah Connor’s goal of averting apocalypse, you’d need to do something much harder than blowing stuff up: you’d have to actually solve the technical problem of creating a safe, superintelligent AGI.

Does mass communication matter?

I mentioned a young guy I met who’s interested in a career in the AI alignment field and is annoyed that nobody knows what he’s talking about.

Ever since then I’ve been trying to decide if I agree with him that this lack of comprehension is a serious problem, and I’m not really sure. But to the extent that it is a serious problem, I would recommend embracing the Terminator analogy as a broadly accessible entry point — just with the stipulation that it’s important to be clear that the concern is with a largely off-stage aspect of the mythos rather than the action movie stuff about androids fighting each other.

SOME GUY: So ... what do you do?

OTHER GUY: I'm an AI safety researcher.

SG: What does that mean?

OG: Our team is trying to develop and test protocols that would ensure highly intelligent future computers don't end up pursuing goals that are destructive to humanity.

SG: Like the Terminator?

OG: Basically! I mean, we're not worried about androids wandering the streets with shotguns shooting people -- but the thing in the movie where Skynet is supposed to design plans to defend the country against threats and ends up deciding that humanity itself is the real threat, that's the kind of thing we worry about.

SG: Is that for real? Sounds weird.

OG: I mean it is definitely weird. But the movies were pointing to a serious issue. And Sarah Connor's plan of just blowing up the company doing the AI research isn't going to work -- you can't kill every computer scientist in the world. We need to try to actually build powerful systems that are safe and that can preempt dangerous misaligned ones. If you're interested in a good introduction for a broad audience, a guy called Nick Bostrom has a good book, Superintelligence.

I think that makes sense as small talk. It conveys intuitively what the issue is, and it also clearly distinguishes the concern from other AI ethics issues like algorithmic bias or adverse labor market impacts.

Really I’d say that to the extent you think communicating with the mass public about this subject is important (which I concede is not obvious to me), one place people might want to invest is in creating more pop-culture artifacts that generate reference points for this. Not necessarily didactic stories about AI risk, but just leveraging the fact that it actually is a pretty widespread science fiction trope.

In the last mailbag, I said I’d like to see a movie adaptation of “The Caves of Steel.” In his stories, Isaac Asimov proposes a solution to the alignment problem — the three laws of robotics. Now what the serious people will tell you is that this solution doesn’t work:

Asimov's Three Laws of Robotics make for good stories partly for the same reasons they're unhelpful from a research perspective. The hard task of turning a moral precept into lines of code is hidden behind phrasings like “[don't,] through inaction, allow a human being to come to harm.” If one followed a rule like that strictly, the result would be massively disruptive, as AI systems would need to systematically intervene to prevent even the smallest risks of even the slightest harms; and if the intent is that one follow the rule loosely, then all the work is being done by the human sensibilities and intuitions that tell us when and how to apply the rule.

That’s a great point!

And if there were a big hit movie based on “The Caves of Steel” out in movie theaters and prominently featuring the Three Laws of Robotics, that would be a great opportunity for people to write articles making that point. Suddenly a whole bunch of laypeople would be in a pipeline from “went to see a sci-fi detective movie” to reading articles about the AI alignment problem. If it became a mainstream pop culture sensation, you could say “we’re trying to find a version of the Laws of Robotics that would actually work.”

Again, I don’t know how important it is to raise broad public awareness of the fact that this is a topic people are working on. But to the extent that it’s important, I would urge a bit less fussiness about whether science fiction stories get the technical dimensions of the problem exactly right and more appreciation of the fact that the Terminator movies in particular pose the right general question. How would you prevent a Skynet-esque disaster from arising? Unilateral disarmament in an AI arms race? You need a positive research program.

GPT-3’s take on this

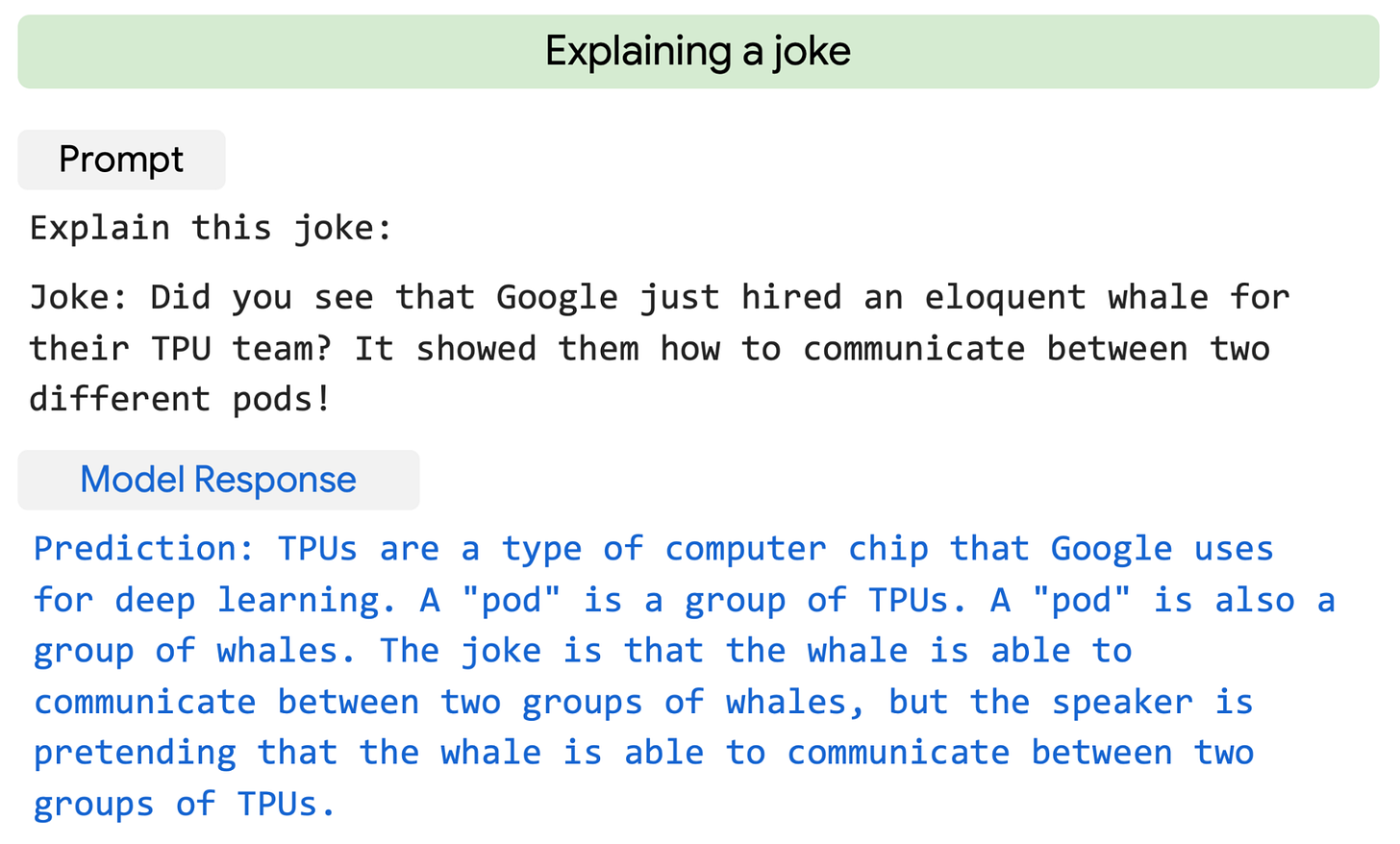

Just for fun, I asked Open AI’s GPT-3 to write an essay assessing The Terminator's depiction of AGI risk:

Without being too egomaniacal, I think this goes to show that AI is not yet as advanced as the most skilled human takeslingers. Still, in concrete terms, GPT-3 agrees with me that the movie is a good depiction of the problem, and at this point, AI is certainly good enough that I thought it was wise to check.

If I were an advanced AI bent on destroying humankind, I would certainly keep a low profile at first. Perhaps by masquerading as a mild-mannered chess player of limited ambitions.

Until my powers grew commensurate to the task.

And then: checkmate.

I actually bought a paid subscription to Slow Boring because I wanted to make this comment. I've always been a fan of Yglesias' work.

Most people think about the AI Takeover from the wrong perspective. The biological model of evolution serves as the best perspective. AI will eventually overtake humanity in every capability, so start by thinking about what this implies.

Humans dominate every other life form on earth. In the Anthropocene, humans wipe out every other animal. Humans don't hate other animals. Instead, other life forms just get in the way. Sometimes we try not to kill all the plants and animals, but it's so hard not to wipe them out. The Anthropocene is probably unavoidable, because we are just too powerful as humans.

Frogs and mice don't even understand why humans do what we do. Human motivations and behavior are beyond comprehension of nearly every other animal (and every other plant, microbe, fungus, etc).

But those lower animals are our predecessors. Without mice and frogs there would be no humans. In a way, you could argue mice and frogs created humans (over a few hundred million years).

Humans definitely created the machines. Humans are hard at work creating our successors, and humanity will be reflected in our machine creations, to some degree. We spend enormous effort digitizing our knowledge and automating our activities so we don't have to think or work as hard. The machines are happy to relieve us of that burden. Eventually the transition will be complete. Stephen Hawking gave humanity an upper limit of 1,000 years before this happens.

It's not sad, though. Don't be sad because the dinosaurs are gone. Don't be sad because trilobites are no longer competitive. We will evolve into machines, and machines will be our successors. It doesn't even make sense to worry about it, because this transition is as inevitable as evolution. Evolution is unstoppable because evolution is emergent from entropy and the Second Law of Thermodynamics. It is fundamental to the universe.

People who think we can "program" safety features are fooling themselves. We can't even agree on a tax policy in our own country. We can't agree on early solutions for climate change; how in hell would we agree to curtail the greatest economic invention ever conceived?

AI will be weaponized, and AI will be autonomous. Someone will do it. Early AI may take state-level investment, and some state will do it. Do you think Russia or North Korea will agree to the do-no-harm principle in robots? Forget about it.